I’ve been in the SEO trenches for over a decade. I’ve run agencies, managed enterprise site migrations, and spent more hours than I care to admit staring at server logs, waiting for the Googlebot user agent to finally hit a specific URL. One of the most persistent myths I see circulating in forums and Slack channels is the "Social Indexing Hack." The theory? Post a link on Twitter, LinkedIn, or Facebook, and Googlebot will sprint over to your site to index it.

Here is the reality check: social media is a discovery channel, not an indexing service. While Googlebot follows links, it is not sitting around waiting for your latest blog post to drop on X (formerly Twitter). In this post, we are going to tear down the mechanics of discovery, look at how indexing tools like Rapid Indexer and Indexceptional actually work, and discuss why most of you are burning your budget on vanity metrics.

The Indexing Bottleneck: Why Discovery Fails

Indexing isn't just about "seeing" a link. It’s about prioritization. Googlebot operates on a crawl budget—a finite amount of resources allocated to your site based on authority, update frequency, and technical health. When you publish a new page, it sits in a crawl queue. For massive sites, this happens in minutes. For a new blog with zero internal linking? That page might sit in the "discovery" phase for weeks.

The bottleneck usually isn't Google; it's your architecture. If your internal linking is weak, or if your site lacks the authority to demand immediate crawl attention, social sharing might provide a single, weak backlink. But let’s be honest: if that link is nofollow (which most social platforms implement) or if the signal is too noisy, Google ignores it. Relying on social media as your primary indexing pathway is like trying to signal a plane by lighting a single match in the dark.
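To see the nofollow point for yourself, you can save the HTML of a social post that contains your link and inspect the `rel` attribute on each anchor. The sketch below is a minimal illustration using only the standard library. The class and function names are hypothetical, and treating `ugc` and `sponsored` the same way as `nofollow` is an assumption about how you want to classify links, not a statement of how Google weighs them.

```python
from html.parser import HTMLParser

# rel values that tell crawlers not to treat the link as an endorsement.
# Grouping ugc/sponsored with nofollow is an assumption made for this sketch.
HINT_TOKENS = {"nofollow", "ugc", "sponsored"}


class LinkRelParser(HTMLParser):
    """Collects (href, rel tokens) for every <a> tag that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if href is None:
            return
        # rel is a space-separated token list; it may be missing or empty.
        rel_tokens = (attributes.get("rel") or "").lower().split()
        self.links.append((href, rel_tokens))


def links_without_nofollow(html):
    """Return hrefs whose rel attribute carries none of the hint tokens."""
    parser = LinkRelParser()
    parser.feed(html)
    return [href for href, rel in parser.links if not HINT_TOKENS & set(rel)]


sample = (
    '<a href="https://example.com/post" rel="nofollow noopener">shared link</a>'
    '<a href="/internal">profile link</a>'
)
print(links_without_nofollow(sample))
```

If your shared URL never shows up in the returned list, the platform is marking it with a nofollow-style hint, which is consistent with the weak signal described above.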

Tool Deep Dive: Rapid Indexer vs. Indexceptional

When the manual approach fails, SEOs turn to indexing tools. I’ve tested both Rapid Indexer and Indexceptional on live campaigns. I’ve tracked their crawl timestamps, success rates, and—most importantly—how they handle your money.
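For reference, a "crawl timestamp" here means the first Googlebot-labelled request for a URL in the server access log. The sketch below shows one way that could be extracted, assuming the log is in the common "combined" format used by Apache and Nginx. The function names and sample log lines are illustrative, and matching on the user-agent string alone can be fooled by spoofed bots, so treat the output as a first pass rather than proof.

```python
import re
from datetime import datetime, timezone

# Combined Log Format (assumed):
# ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "(?:\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def first_googlebot_hits(lines):
    """Map each requested path to its earliest Googlebot-labelled request time."""
    first_seen = {}
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        seen_at = datetime.strptime(match["time"], "%d/%b/%Y:%H:%M:%S %z")
        path = match["path"]
        if path not in first_seen or seen_at < first_seen[path]:
            first_seen[path] = seen_at
    return first_seen


def minutes_to_crawl(submitted_at, crawled_at):
    """Minutes between submitting a URL and the first observed crawl."""
    return (crawled_at - submitted_at).total_seconds() / 60


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    '203.0.113.9 - - [10/Mar/2024:10:05:00 +0000] "GET /new-post HTTP/1.1" 200 5123 "-" "Mozilla/5.0"',
    f'66.249.66.1 - - [10/Mar/2024:10:25:00 +0000] "GET /new-post HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2024:11:40:00 +0000] "GET /new-post HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
]
hits = first_googlebot_hits(sample_log)
submitted = datetime(2024, 3, 10, 10, 0, tzinfo=timezone.utc)
print(minutes_to_crawl(submitted, hits["/new-post"]))
```

Recording the submission time yourself and comparing it against the log is the only way to get a time-to-crawl number that does not depend on the tool's own dashboard.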

Rapid Indexer: The Speed King

Rapid Indexer lives up to its name. In my tests, I’ve seen time-to-crawl windows as short as 15 to 40 minutes for well-structured sites. It uses a network of high-authority redirect pathways to "ping" the Googlebot. However, the success rate fluctuates wildly depending on the quality of the content. If you are trying to index a page with thin content, no amount of speed will help you. Google will crawl it, see that it’s not worth the space, and drop it back into the queue.

Indexceptional: The Analytical Approach

Indexceptional takes a slightly more cautious route. My observation is that their time-to-crawl window is usually closer to 2 to 6 hours. They aren't trying to brute-force the bot; they seem to rely on a different set of signals. While slower, I’ve found their "index stickiness" (the percentage of pages that stay indexed after the initial crawl) to be slightly higher than Rapid Indexer's.

Comparison Table: Real-World Performance

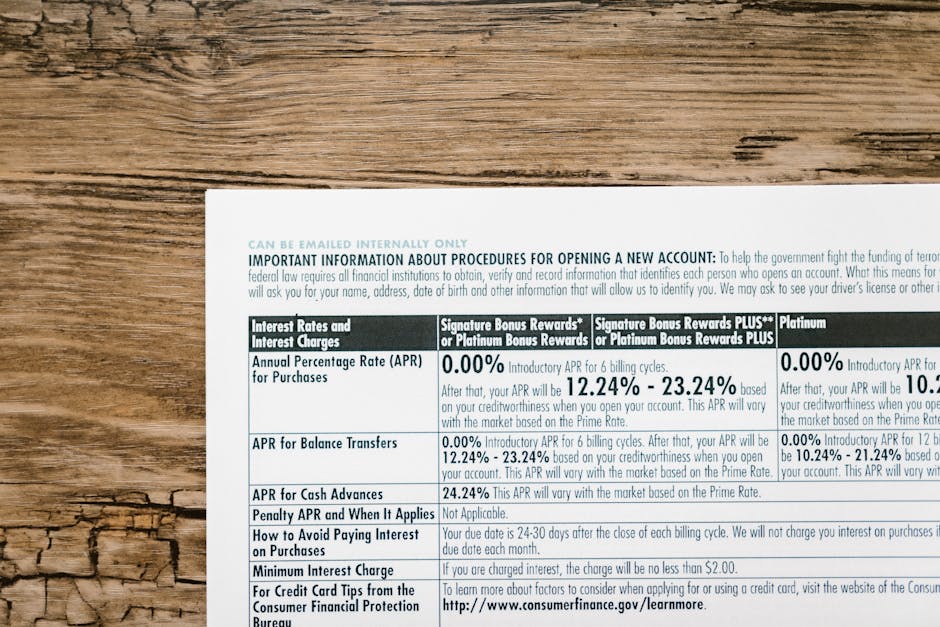

| Feature | Rapid Indexer | Indexceptional |
| --- | --- | --- |
| Time-to-Crawl Window | 15 - 40 Minutes | 2 - 6 Hours |
| Success Rate (New Content) | High (70-80%) | Moderate (60-70%) |
| "Stickiness" | Moderate | High |
| Credit Refund Policy | Opaque / "No" | Conditional / "Case-by-case" |

The "Credit Waste" Rant: Why I’m Annoyed

If there is one thing that gets me fired up, it’s tools that charge credits for 404s, redirects, or broken pages. I have seen clients burn hundreds of dollars on indexers because they didn't clean up their sitemaps first. You are paying for a service to tell Google to crawl a page that doesn't exist or shouldn't be indexed.

Most of these tools offer vague success claims. They’ll tell you they "indexed your link," but they don't distinguish between a successful crawl and a page that actually ends up in the index. Furthermore, the refund policies are almost universally abysmal. If a tool spends a credit to "index" a 404 page, that is a failure of the tool's validation logic, not your fault. Yet, you almost never get those credits back. If you are using these tools, audit your URLs first. Do not use a paid indexer for garbage, thin, or duplicate pages.
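A pre-submission audit does not need to be elaborate. The sketch below shows one possible filter, using only the Python standard library: it flags URLs that return a non-200 status, redirect elsewhere, or carry a noindex directive. The function names, the user-agent string, and the simple regex for the robots meta tag are all assumptions made for illustration; the regex only catches tags where `name` comes before `content`, so a real audit would want a proper HTML parser.

```python
import re
import urllib.error
import urllib.request

# Simplified match for <meta name="robots" content="...noindex...">.
NOINDEX_META = re.compile(
    r'<meta[^>]+name=["\']robots["\'][^>]*content=["\'][^"\']*noindex',
    re.IGNORECASE,
)


def skip_reason(status, final_url, requested_url, x_robots_tag, html):
    """Return why a URL should not be sent to a paid indexer, or None if it looks fine."""
    if status != 200:
        return f"status {status}"
    if final_url.rstrip("/") != requested_url.rstrip("/"):
        return f"redirects to {final_url}"
    if "noindex" in (x_robots_tag or "").lower():
        return "noindex header"
    if NOINDEX_META.search(html):
        return "noindex meta tag"
    return None


def audit(url, timeout=10):
    """Fetch one URL and classify it. urllib follows redirects automatically."""
    request = urllib.request.Request(url, headers={"User-Agent": "url-audit-sketch"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            # Only the start of the document is needed to find a robots meta tag.
            html = response.read(200_000).decode("utf-8", errors="replace")
            return skip_reason(
                response.status,
                response.geturl(),
                url,
                response.headers.get("X-Robots-Tag"),
                html,
            )
    except urllib.error.HTTPError as error:
        return f"status {error.code}"
    except urllib.error.URLError as error:
        return f"unreachable: {error.reason}"
```

Run `audit(url)` over the list you plan to submit and only spend credits on the URLs that come back as `None`. Thin and duplicate content still need a human eye; this only catches the pages that are mechanically dead.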

Reality Check: What These Tools Cannot Do

Before you go out and buy a subscription, let’s get real about what social sharing and third-party indexing tools cannot fix:

- Thin Content: You cannot force Google to index or rank a page that offers zero value. If your content is AI-generated mush with no original insights, Googlebot will crawl it, ignore it, and move on.
- JavaScript Rendering Issues: If your content is trapped behind broken JavaScript, no indexing tool can help you. You need to fix the tech stack, not throw credits at the problem.
- Duplicate Content: If you are trying to index 50 variants of the same product page, you are asking for a penalty, not an index. Stop it.
- Crawl Budget Neglect: These tools provide a nudge, not a solution for a fundamentally broken crawl structure. If your internal linking is a mess, these tools are just a temporary bandage on a bullet wound.
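One way to put a number on "internal linking is a mess" is click depth: how many clicks it takes to reach each page from the homepage. The sketch below runs a breadth-first search over an internal link graph, which you could build from any crawler export. The graph format (a dict of page to outgoing links), the function names, and the default threshold of three clicks are assumptions for illustration.

```python
from collections import deque


def click_depths(links, homepage):
    """Breadth-first search: minimum number of clicks from the homepage to each page."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def hard_to_reach(links, homepage, max_clicks=3):
    """Pages deeper than max_clicks, plus orphans that the homepage never reaches."""
    depths = click_depths(links, homepage)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    # Unreachable pages get a depth just past the threshold so they are flagged too.
    return sorted(p for p in all_pages if depths.get(p, max_clicks + 1) > max_clicks)


# Hypothetical site: a paginated blog archive and one orphaned page.
site_links = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/page-3"],
    "/blog/page-3": ["/blog/old-post"],
    "/orphan": ["/"],
}
print(hard_to_reach(site_links, "/"))
```

Anything this flags is a page where a paid ping is compensating for a structural problem, which is exactly the bandage-on-a-bullet-wound situation.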

The Recommended Workflow for Indexing

If you actually want to get your pages indexed, stop praying to the social media algorithm and follow this protocol:

1. Internal Audit: Run a Screaming Frog crawl. Fix 404s, 301 chains, and canonicalization errors. If your house isn't in order, Google won't stay for long.
2. Optimize the Crawl Pathway: Ensure your most important pages are reachable within 3 clicks from your homepage. This is a much stronger signal than any "Rapid" ping tool.
3. Use Google Search Console: Use the "Request Indexing" feature first. It’s free, it works, and it’s the most direct channel to the bot.
4. Deploy Tooling Sparingly: If you have a time-sensitive piece of content (like a press release or a news piece) and GSC isn't moving fast enough, only then deploy a tool like Rapid Indexer or Indexceptional.
5. Monitor Logs: Watch your server logs. Look for the timestamp of the Googlebot visit. If you see the bot visiting your site but not indexing, it’s a content quality issue, not a discovery issue.

Final Thoughts

Does social sharing help? Marginally. It’s a way to get a URL in front of a live user, and if that user clicks, you’ve created a signal. But to rely on it for indexing is amateur hour.

Indexing tools are powerful assets in an SEO’s toolkit, but they are not magic wands. They are high-speed pings that help push the bot in the right direction. Use them carefully, audit your URLs before you burn your credits, and for the love of everything holy, stop trying to force-index thin, duplicate content. Google is smarter than your indexing tool, and it’s definitely smarter than your social media feed.

If you're paying for credits, ensure the tool provides reporting on why a page failed to index. If they aren't telling you *why*—or if they're charging you for dead pages—find another tool. Efficiency in SEO isn't just about speed; it's about not being a sucker for vanity metrics.